“Photos are the atomic unit of social platforms,” asserted Om Malik, writing last December on the “visual Web.” “Photos and visuals are the common language of the Internet.”

Now personally, I see text as communicating orders of magnitude more information than photos, whether via social platforms or across the Web or the rest of the Net, but there’s no disputing visuals’ immediacy and emotional impact. That’s why, when we look at social analytics broadly, and in close focus at sentiment analysis (at technologies that discern and decipher opinion, emotion, and intent in data big and small), we have to look at the use and sense of photos and visuals.

Francesco D’Orazio, chief innovation officer at UK agency FACE, vice president of product at FACE spin-off Pulsar, and co-founder of the Visual Social Media Lab, has been doing just that. That last affiliation is quite interesting. The lab “brings together a group of interdisciplinary researchers interested in analysing social media images. This involves expertise from media & communication studies, art history & visual culture, software studies, sociology, computer & information science as well as expertise from industry.”

Do you really need a laundry list of competencies to fully grasp the import of visual social media? Maybe so. But maybe, all the same, we can get a sense of image understanding (of techniques that uncover visuals’ content, meaning, and emotion) in just a few minutes. Francesco D’Orazio (Fran) is up to the challenge. He’ll be presenting on Analysing Images in Social Media in just a few days (from this writing) at the Sentiment Analysis Symposium, a conference I organize, taking place July 15-16 in New York. He’ll also participate in a Research Frontiers panel alongside Gwen Littlewort, senior data scientist at Emotient, a firm that applies facial coding for emotion analysis, and Robert Dale, a recovering academic who is an expert in natural language generation (NLG) and co-founder of NLG firm Arria.

And Fran has gamely taken a shot at a set of interview questions I posed to him. Here, then, complete with British spellings, is Francesco D’Orazio’s explanation:

How to Machine-Learn Meaning in a Visual Social World

Seth Grimes> Let’s jump into the deep end. You have written, “Images are way more complex cultural artifacts than words. Their semiotic complexity makes them way trickier to study than words and without proper qualitative understanding they can prove very misleading.” How does one gain proper qualitative understanding of an image, say some random photo that’s posted to Instagram or Twitter? And by “one,” I mean someone other than the person the image was intended for. I mean, for instance, a brand or an agency such as FACE.

Francesco D’Orazio> Images are fundamental to understanding social media. Social platforms really fulfil their potential not when they’re simply providing a forum for discussion but when they manage to offer a window into someone else’s life. The discussion is interesting, but it’s the idea of the window that keeps us coming back for more. That’s why image analysis is a key angle for studying social media and human behaviour (and for understanding why people are buying Kim Kardashian’s book of selfies, aptly named “Selfish”).

There are a number of frameworks you can use to analyse images qualitatively, sometimes in combination, from Iconography to Visual Culture, Visual Ethnography, Semiotics, and Content Analysis. At FACE, qualitative image analysis usually happens within a visual ethnography or content analysis framework, depending on whether we’re analysing the behaviours in a specific research community or a phenomenon taking place in social media.

Of course, the new availability of large bodies of images calls into question the fitness of these methods for making sense of today’s visual social media landscape. But qualitative methods have an advantage: they help you understand the context of an image better than any algorithm does. And by context I mean what’s around the image, who the author is, what the mode and site of production are, who the audience of the image is, what the main narrative is and what else there is around that main narrative, what the image itself tells me about the author, but also, and fundamentally, who’s sharing this image, when and after what, how the image is circulating, what networks are being created around it, how the meaning of the image mutates as it spreads to new audiences, etc., etc.

Paradoxically, images are literally in your face, but they are way less explicit than text. They represent the world at a much higher definition, which most of the time means they’re packing multiple threads of meaning that may or may not be intertwined with the main narrative. Humans are really good at dealing with that mess selectively, iteratively, hierarchically, and very quickly because we’re good at story-making. We’re good at making assumptions and inferring things. We’re good at prioritising and reducing complexity because we’re not specialised. We can build a whole story behind an image without necessarily having all the information we need to come to a conclusion, yet with the same degree of accuracy as if we had it.

Seth> You refer to semiotics. What’s semiotics, anyway? Or more to the point, what good is semiotics to an insights professional? After all, “What’s in a name? that which we call a rose / By any other name would smell as sweet.”

Francesco> The text of an image extends way beyond the image itself. Professor Gillian Rose frames the issue nicely by studying an image in four contexts: the site of production, the site of the image itself, the site of audiencing, and the site of circulation.

In that sense, semiotics is a compromise and has to be used in combination with more holistic methods. But semiotics is also essential to break down the image you’re analysing into codes and systems of codes that carry meaning. And if you think about it, semiotics is the closest thing we have in qualitative methods to the way machine learning works: extracting features from an image and then studying the occurrence and co-occurrence of those features in order to formulate a prediction, or a guess, to put it bluntly.
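To make the analogy concrete, here is a minimal sketch of “extract features, then study their occurrence and co-occurrence to formulate a guess”, using a Bernoulli naive Bayes classifier. It is an illustration only, not FACE’s or Pulsar’s method; the feature names (`red`, `cylinder`, `white_script`, and so on) are hypothetical stand-ins for whatever codes a semiotic breakdown or a feature extractor would produce.

```python
import math
from collections import Counter, defaultdict


def train(examples):
    """Count how often each feature occurs together with each label.

    examples: list of (feature_set, label) pairs.
    """
    label_counts = Counter()
    feature_counts = defaultdict(Counter)
    vocab = set()
    for features, label in examples:
        label_counts[label] += 1
        for f in features:
            feature_counts[label][f] += 1
            vocab.add(f)
    return label_counts, feature_counts, vocab


def predict(model, features):
    """Return the label with the highest Bernoulli naive Bayes score."""
    label_counts, feature_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label, n in label_counts.items():
        score = math.log(n / total)  # log prior of the label
        for f in vocab:
            # Laplace-smoothed probability that feature f appears given the label
            p = (feature_counts[label][f] + 1) / (n + 2)
            score += math.log(p if f in features else 1 - p)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical, hand-coded examples: the features stand in for extracted "codes"
examples = [
    ({"red", "cylinder", "white_script"}, "coke"),
    ({"red", "cylinder"}, "coke"),
    ({"red", "white_script"}, "coke"),
    ({"green", "bottle"}, "other"),
    ({"blue", "cylinder"}, "other"),
    ({"green", "leaf"}, "other"),
]
model = train(examples)
print(predict(model, {"red", "white_script"}))  # coke
```

The “naive” assumption is that features are independent given the label; real image models drop that assumption, but the basic move of weighing feature occurrence against labels is the same.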

Seth> Could you please sketch the interesting technologies and techniques available today, or emerging, for image analysis?

Francesco> There are many methods and techniques currently used to analyse images, and they serve hundreds of use cases. You can generally split these methods into two types: image analysis/processing and machine learning.

Say you want to find pictures of Coca-Cola on Instagram and you don’t have anything other than the images to analyse. Image analysis would focus on breaking down the images into fundamental components (edges, shapes, colours, etc.) in order to perform statistical analysis on their occurrence and, based on that, decide whether each image contains a can of Coke. Machine learning, instead, would focus on building a model from example images that have been marked as containing a can of Coke. Based on that model, rather than by following static program instructions, ML would guess whether the image contains a can of Coke or not. Machine learning is pretty much the only effective route when programming explicit algorithms is not feasible because, for example, you don’t know how the can of Coke is going to be photographed. You don’t know what it is going to end up looking like, so you can’t predetermine the set of features necessary to identify it. That is also why machine learning (and deep learning) has become the preferred option when it comes to analysing user-generated content, specifically images.

So it really depends on the complexity of the question you’re trying to answer. Generally speaking, the best outcomes are achieved when you integrate image analysis/processing for feature extraction and machine learning for pattern recognition.
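A toy sketch of that integration, assuming images arrive as lists of `(r, g, b)` pixel tuples: a hand-written image-analysis step reduces each image to a coarse colour histogram, and a nearest-centroid classifier (a deliberately simple stand-in for real machine learning) learns from labelled examples. The function names and colour values are hypothetical; a real Coke detector would need far richer features than colour alone.

```python
import math


def colour_histogram(pixels, bins_per_channel=2):
    """Image-analysis step: reduce an image (list of (r, g, b) tuples, 0-255)
    to a normalised, coarse colour histogram (feature extraction)."""
    size = 256 // bins_per_channel
    hist = [0.0] * bins_per_channel ** 3
    for r, g, b in pixels:
        idx = ((r // size) * bins_per_channel + g // size) * bins_per_channel + b // size
        hist[idx] += 1
    return [h / len(pixels) for h in hist]


def fit_centroids(labelled):
    """Learning step: average the feature vectors of each label's examples."""
    sums, counts = {}, {}
    for pixels, label in labelled:
        feats = colour_histogram(pixels)
        if label not in sums:
            sums[label], counts[label] = [0.0] * len(feats), 0
        sums[label] = [s + f for s, f in zip(sums[label], feats)]
        counts[label] += 1
    return {lab: [s / counts[lab] for s in vec] for lab, vec in sums.items()}


def classify(centroids, pixels):
    """Pattern recognition: pick the label whose centroid is closest."""
    feats = colour_histogram(pixels)
    return min(centroids, key=lambda lab: math.dist(centroids[lab], feats))


# Hypothetical "images" built from a few flat colours
RED, WHITE = (220, 20, 30), (240, 240, 240)
GREEN, BLUE = (30, 160, 40), (20, 40, 200)
training = [
    ([RED] * 8 + [WHITE] * 2, "coke"),
    ([RED] * 7 + [WHITE] * 3, "coke"),
    ([GREEN] * 6 + [WHITE] * 4, "other"),
    ([BLUE] * 9 + [WHITE] * 1, "other"),
]
centroids = fit_centroids(training)
print(classify(centroids, [RED] * 6 + [WHITE] * 4))  # coke
```

The split mirrors the distinction above: `colour_histogram` encodes a fixed, hand-chosen idea of what matters, while `fit_centroids` lets the labelled examples decide what a “coke” image looks like in that feature space.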

One of the most interesting machine learning (deep learning) techniques for analysing images is the convolutional neural network (CNN). You might have come across the Google Research robot dreams (or nightmares) recently. [The Guardian reports, “Yes, androids do dream of electric sheep.”] CNNs look at an image iteratively, in small portions, replicating the idea of receptive fields in human vision. These networks have proven to be amazing at object recognition and are already being deployed in real-world applications.
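The receptive-field idea can be sketched in a few lines: a “valid” 2D convolution slides a small kernel over the image, so each output value depends only on a local patch. This is a hand-rolled illustration of one convolution followed by a ReLU, not Google’s or Pulsar’s code; a real CNN learns its kernels from data and stacks many such layers.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution as used in CNN layers (strictly, cross-correlation:
    the kernel is not flipped). Each output value depends only on a small
    kh x kw patch of the input: its receptive field."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        out.append(row)
    return out


def relu(feature_map):
    """Keep positive responses, zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# A 3x5 image: dark on the left, bright on the right (a vertical edge)
image = [
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
]
# A hand-picked vertical-edge detector; a CNN would learn kernels like this
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
print(relu(conv2d(image, vertical_edge)))  # [[0.0, 3.0, 3.0]]
```

The output is nonzero only where the 3x3 receptive field straddles the dark-to-bright boundary, which is the kind of local evidence deeper layers combine into object-level detections.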

We use CNNs in Pulsar for detecting the topic of an image by understanding which objects or entities we can identify in that image. We then cluster the semantic tags to understand what configuration of subjects, entities, and locations appears in images about a specific brand, subject, etc. Having a set of topics attached to an image means you can explore, filter, and mine the visual content more effectively. So, for example, say you are an ad agency and want to know where your client’s rum brand is consumed the most, because you want to set your next ad in a situation that’s relevant to your audience. You can use this functionality to quantitatively assess whether most pictures of people drinking rum are set on the beach, at house parties, or at concerts, and what types of personas, situations, etc., etc. they involve. A bit like a statistical mood board. We’re working with AlchemyAPI on this, and it’s coming to Pulsar in September 2015.
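A “statistical mood board” can be approximated, under stated assumptions, by counting setting tags among images that carry a subject tag. The sketch assumes each image already has a set of semantic tags from some tagger (it does not model AlchemyAPI’s actual API), and the setting vocabulary is hypothetical.

```python
from collections import Counter

# Hypothetical vocabulary of "setting" tags that an image tagger might return
SETTINGS = {"beach", "house party", "concert", "bar"}


def setting_shares(tagged_images, subject="rum", settings=SETTINGS):
    """Share of images tagged with `subject` that also carry each setting tag.

    tagged_images: iterable of tag sets, one per image.
    """
    with_subject = [tags for tags in tagged_images if subject in tags]
    if not with_subject:
        return {}
    counts = Counter(s for tags in with_subject for s in tags & settings)
    return {s: c / len(with_subject) for s, c in counts.most_common()}


# Hypothetical tagger output for five images
images = [
    {"rum", "beach", "people"},
    {"rum", "beach", "sunset"},
    {"rum", "house party"},
    {"beer", "concert"},
    {"rum", "concert", "people"},
]
print(setting_shares(images))
```

Here half of the rum images are set on a beach; shares can sum to more than 1 when an image carries several setting tags, which is usually what an analyst wants from a mood board.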

But topic extraction is just one of the visual research use cases we’re working on. We’re planning the release of Pulsar Vision, a suite of 6 different tools for visual analysis within Pulsar, including extracting text from an image, identifying the most representative image in a news article, blog post, or forum thread, face detection, similarity clustering, and contextual analysis. This last one is one of the most challenging. It involves triangulating the information contained in the image with the information we can extract from the caption and the information we can infer from the author’s profile, to offer more context to the content that’s being analysed (brand recognition + situation identification + author demographics), e.g., when do which audiences consume what kind of drink in which situation?
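Contextual triangulation might look something like the following sketch, which cross-tabulates audience, drink, and situation. The post structure (`image_tags`, `caption`, `author["age_band"]`) and the keyword vocabularies are hypothetical, and the rule “use the image first, fall back to the caption” is an assumption made for illustration, not how Pulsar Vision works.

```python
import re
from collections import Counter

# Hypothetical vocabularies; a real system would use trained detectors.
DRINKS = {"rum", "beer", "wine"}
SITUATIONS = {"beach", "party", "dinner"}


def situation_of(post):
    """Situation from image tags first, falling back to caption keywords."""
    hits = post["image_tags"] & SITUATIONS
    if not hits:
        words = set(re.findall(r"[a-z]+", post["caption"].lower()))
        hits = words & SITUATIONS
    return min(hits) if hits else "unknown"  # min() keeps ties deterministic


def triangulate(posts):
    """Count (audience, drink, situation) triples across posts."""
    table = Counter()
    for post in posts:
        situation = situation_of(post)
        for drink in post["image_tags"] & DRINKS:
            table[(post["author"]["age_band"], drink, situation)] += 1
    return table


# Hypothetical posts combining image, caption, and author-profile signals
posts = [
    {"image_tags": {"rum", "beach"}, "caption": "Sunday!", "author": {"age_band": "18-24"}},
    {"image_tags": {"rum"}, "caption": "Great party tonight", "author": {"age_band": "18-24"}},
    {"image_tags": {"beer"}, "caption": "Dinner with friends", "author": {"age_band": "35-44"}},
]
for (audience, drink, situation), n in triangulate(posts).items():
    print(audience, drink, situation, n)
```

The second post shows why triangulation helps: the image alone says “rum” but not where, and the caption supplies the situation.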

Seth> In that Q1 quotation above, you contrast the semiotic complexity of words and of images. But isn’t the answer to analyze both? To analyze all salient available data, preferably jointly or at least with some form of cross-validation?

Francesco> Whenever you can, absolutely yes. The challenge is how you bring the various kinds of data together to help the analyst make inferences and come up with new research hypotheses based on the combination of all the streams. At the moment we’re looking at a way of combining author, caption, engagement, and image data into a coherent model capable of suggesting, for example, personas, so you can segment your audience based on a mix of behavioural and demographic traits.
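One generic way to suggest personas from combined streams is to cluster authors on a feature vector that mixes behavioural and demographic signals. The sketch below runs plain k-means (Lloyd’s algorithm); the three features per author are hypothetical, already-normalised values, and nothing here reflects Pulsar’s actual model.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain k-means (Lloyd's algorithm). Centroids are seeded with the first
    k points for reproducibility; real use would want smarter seeding."""
    centroids = [list(p) for p in points[:k]]
    assignment = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest centroid.
        assignment = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return assignment, centroids


# Hypothetical authors: [age (normalised), engagement rate, share of image posts]
authors = [
    [0.10, 0.90, 0.80],
    [0.85, 0.15, 0.10],
    [0.15, 0.85, 0.90],
    [0.90, 0.10, 0.20],
]
labels, centres = kmeans(authors, k=2)
print(labels)  # [0, 1, 0, 1]
```

Each resulting centre is a candidate persona (here, roughly “young, highly engaged, image-heavy” versus the opposite), which an analyst would then name and sanity-check qualitatively.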

Seth> You started out as a researcher and joined an agency. Now you’re also a product guy. Compare and contrast the roles. What does it take to be good at each, and at the intersection of the three?

Francesco> I guess research and product have always been there in parallel. I started as a social scientist focussed on online communication and then specialised in immersive media, which is what led me to study the social web. I started doing hands-on research on social media in 1999 when I met a brilliant social psychology professor who got me into content analysis for digital media, at the time mostly blogs, forums, and bulletin boards. Back then we were mostly interested in studying rumours and how they spread online. But then, after my Ph.D., I left academia to found a social innovation startup, and that’s when I got into product design, user experience, and product management. When I decided it was time to move on from my second startup and joined FACE, I saw the opportunity to bring together all the things I had done until then (social science, social media, product design, and information design), and Pulsar was born.

What I like about being a product person, as opposed to working in research for an agency, is that you define your own agenda. There’s a stronger narrative to what you do, as opposed to jumping from one project to the next based on someone else’s agenda.

But having spent a long time doing hands-on research and dealing with clients and other “users” like me put me in a great position to design a research product, which is what Pulsar ultimately is.

Other than knowing your user really well, and being one yourself, I think being good at product means constantly cultivating, questioning, and shaping the vision of the industry you’re in, while at the same time being extremely attentive to the details of the execution of your product roadmap. Ideas are cheap and can be easily copied. You make the real difference when you execute them well.

Seth> Why did the agency you work for, FACE, find it necessary or desirable to create a proprietary social intelligence tool, namely Pulsar? Were none of the dozens of tools on the market satisfactory?

Francesco> There are hundreds of tools that do some sort of social intelligence. At the time of studying the feasibility of Pulsar, I counted around 450 tools including free, premium, and enterprise software. But they all shared the same approach. They were looking at social media data as quantitative data, so they were effectively analysing social media in the same way that Google Analytics analyses website clicks. The problem with that approach is that it throws away 80% of the value of social data, which is qualitative. Social data is qualitative data on a quantitative scale, not quantitative data, so we need tools to be able to mine the data accordingly.

The other big gap in the market we spotted was research. Most of the tools around were also fairly top-line and designed for a very basic PR use case. No one was really catering for the research use case: audience insights, innovation, brand strategy, etc. Coming from a research consultancy, we felt we had a lot to say in that respect, so we went for it.

Seth> Please tell us about a job that Pulsar did really well, that other tools would have been hard-pressed to handle. Extra points if you can provide a data viz to augment your story.

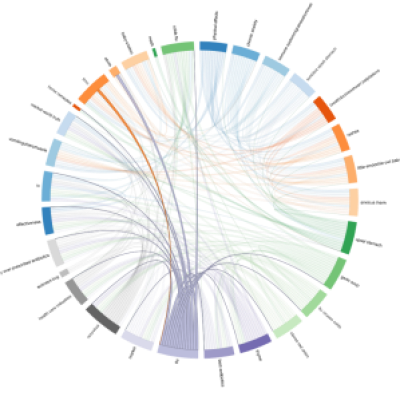

Francesco> I think Pulsar introduced unprecedented granularity and flexibility in exploring the data (e.g., better filters, more data enrichments); a solid research framework on top of the data, such as new ways of sampling social data by topic, audience, or content, or the ability to perform discourse analysis to spot conversational patterns (see the attached visual on what remedies British people discuss when talking about flu); a great emphasis on interactive data visualisation to make the data-mining experience fast, iterative, and intuitive; and generally a user experience designed to make research with big data easier and more accessible.

Seth> What does Pulsar not do (well), that you’re working to make it do (better)?

Francesco> We always saw Pulsar as a real-time audience feedback machine, something that you peek into to learn what your audience thinks, does, and looks like. Social data is just the beginning of the journey. The way people use it is changing, so we’re working on integrating data sources beyond social media such as Google Analytics, Google Trends, sales data, stock price trends, and others. The pilots we have run clearly show that there’s a lot of value in connecting those datasets.
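Connecting datasets usually starts with aligning them on a shared key such as the date. As a hedged sketch, assuming both sources can be reduced to `{date: value}` dictionaries (the daily mention counts and sales figures below are hypothetical), the code inner-joins them and computes a Pearson correlation.

```python
import math


def join_by_date(mentions, sales):
    """Inner-join two {date: value} series on the dates they share."""
    dates = sorted(mentions.keys() & sales.keys())
    return dates, [mentions[d] for d in dates], [sales[d] for d in dates]


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


# Hypothetical daily brand mentions (social) and units sold (sales system)
mentions = {"2015-07-01": 10, "2015-07-02": 20, "2015-07-03": 30, "2015-07-04": 5}
sales = {"2015-07-01": 100, "2015-07-02": 210, "2015-07-03": 290}
dates, xs, ys = join_by_date(mentions, sales)
print(dates, round(pearson(xs, ys), 3))
```

A strong correlation over a handful of days says nothing about causation; it is a prompt for the analyst to form and test a hypothesis.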

Human content analysis is also still not as advanced as I’d like it to be on the platform. We integrate CrowdFlower and Amazon Mechanical Turk. You can create your own taxonomies, tag content, and manipulate and visualise the data based on your own frameworks, but there’s more we could do around sorting and ranking, which are key tasks for anyone doing content analysis.
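Crowd-tagging output typically needs aggregating before it can be sorted or ranked. A minimal sketch, assuming judgements arrive as hypothetical `(item_id, worker_id, label)` tuples (not CrowdFlower’s or Mechanical Turk’s actual export format): take the majority label per item, record how strongly workers agreed, and rank the most contested items first for an analyst to review.

```python
from collections import Counter, defaultdict


def aggregate(judgements):
    """judgements: iterable of (item_id, worker_id, label) tuples.

    Returns {item_id: (majority_label, agreement)}, where agreement is the
    share of workers who chose the majority label.
    """
    by_item = defaultdict(Counter)
    for item_id, _worker, label in judgements:
        by_item[item_id][label] += 1
    result = {}
    for item_id, counts in by_item.items():
        label, votes = counts.most_common(1)[0]
        result[item_id] = (label, votes / sum(counts.values()))
    return result


def rank_for_review(aggregated):
    """Lowest-agreement items first, so the contested ones get a second look."""
    return sorted(aggregated, key=lambda item: aggregated[item][1])


# Hypothetical sentiment judgements from three workers on two posts
judgements = [
    ("post-1", "w1", "positive"), ("post-1", "w2", "positive"), ("post-1", "w3", "negative"),
    ("post-2", "w1", "neutral"), ("post-2", "w2", "neutral"), ("post-2", "w3", "neutral"),
]
aggregated = aggregate(judgements)
print(rank_for_review(aggregated))  # ['post-1', 'post-2']
```

Majority vote is the simplest aggregation rule; weighting workers by their historical accuracy is a common refinement when some annotators are more reliable than others.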

I wish we had been faster at developing both sides of the platform, but if there’s one thing I’ve learned in 10 years of building digital products, it’s that you don’t want to be too early to the party. It just ends up being very expensive (and awkward).

Seth> You’ll be speaking at the Sentiment Analysis Symposium on Analysing Images in Social Media. What one other SAS15 talk, not your own, are you looking forward to?

Francesco> Definitely Emojineering @ Instagram by Thomas Dimson!

Seth> Thanks, Fran! 😸😸